Splat Feature Solver: Fast and Provable Feature Lifting for 3D Scene Understanding

ICLR 2026 Submission

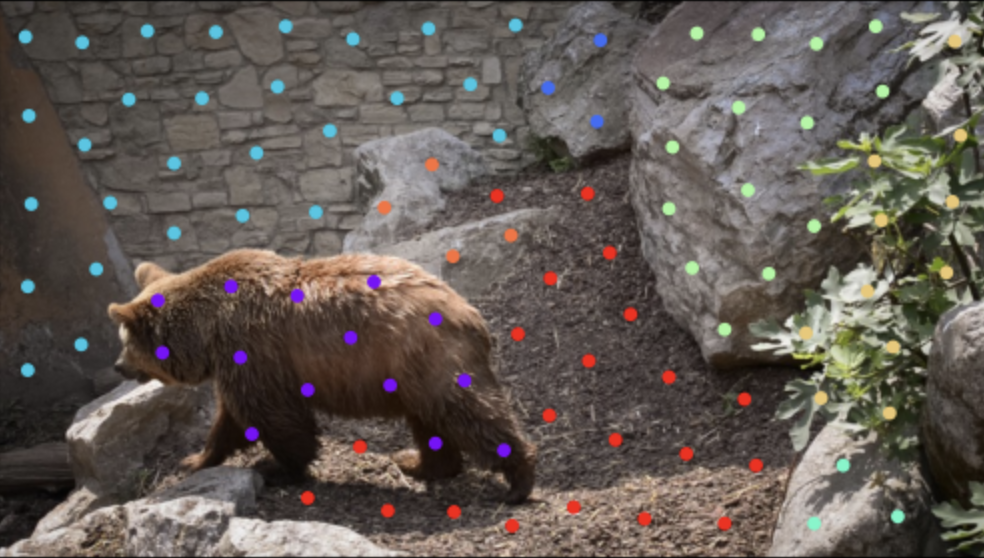

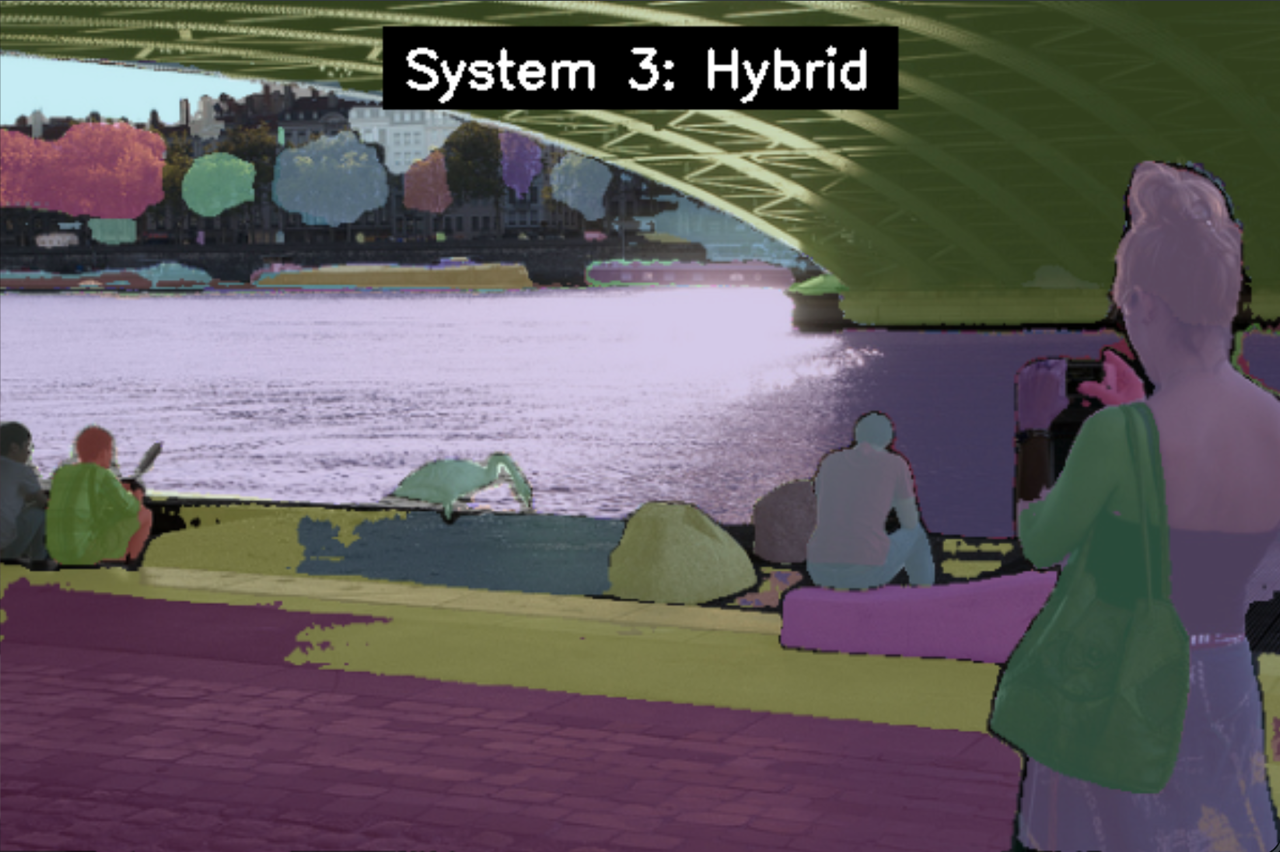

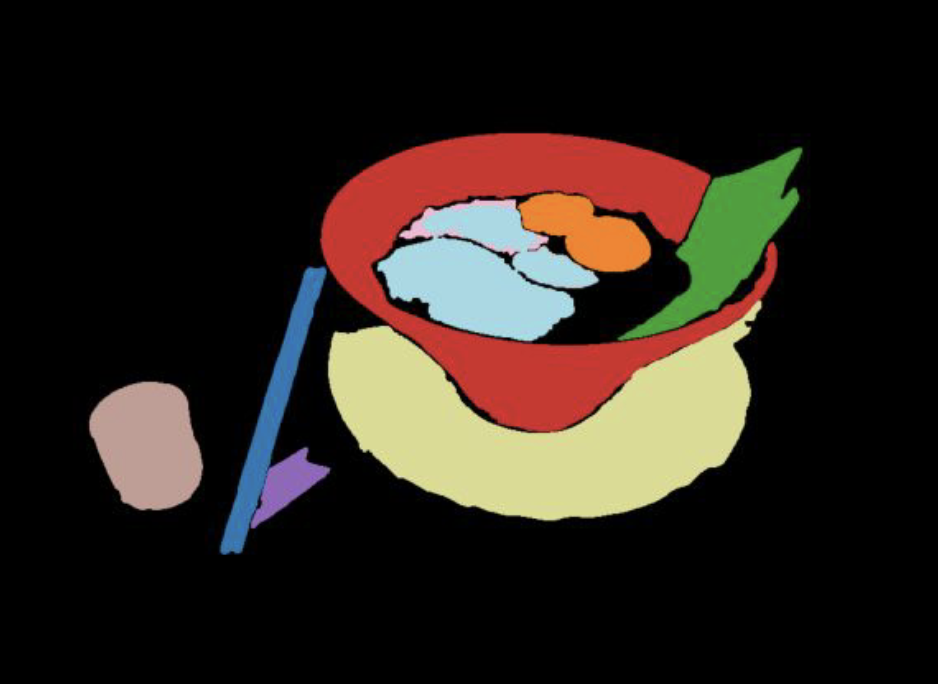

We introduce a unified and efficient approach to feature lifting that attaches rich image descriptors such as CLIP to splat-based 3D representations in minutes. Our method formulates the lifting task as a sparse linear inverse problem with provable error bounds.
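As a rough illustration of what "a sparse linear inverse problem" can mean here, assume that each rendered pixel's feature is a weighted sum of per-splat features, with the weights coming from alpha compositing. Stack those weights into a sparse matrix `W` (pixels × splats) and the 2D descriptors into `F` (pixels × channels). Lifting then becomes the least-squares problem `min_X ||W X - F||_F^2`, where `X` holds one feature vector per splat. The following is a minimal sketch under that assumption, not the authors' solver. The function `lift_features`, the `damp` regularizer, and the synthetic data are hypothetical, and the sketch does not reproduce the paper's error bounds. It uses SciPy's `lsqr`, which handles one right-hand side at a time, so each feature channel is solved separately.

```python
import numpy as np
import scipy.sparse as sp
from scipy.sparse.linalg import lsqr


def lift_features(weights, pixel_features, damp=0.0):
    """Hypothetical helper: recover per-splat features X from per-pixel features F.

    Solves min_X ||W X - F||_F^2 + damp^2 ||X||_F^2 one feature channel
    (column of F) at a time with LSQR.

    weights:        sparse (n_pixels, n_splats) matrix; entry (p, i) is the
                    ASSUMED blending weight of splat i at pixel p.
    pixel_features: dense (n_pixels, d) array of 2D descriptors.
    damp:           optional Tikhonov regularization (an assumption, not from the source).
    """
    W = sp.csr_matrix(weights)
    F = np.asarray(pixel_features, dtype=np.float64)
    if W.shape[0] != F.shape[0]:
        raise ValueError("weights and pixel_features disagree on n_pixels")
    X = np.empty((W.shape[1], F.shape[1]))
    for j in range(F.shape[1]):
        # lsqr returns a tuple; the solution vector is its first element.
        X[:, j] = lsqr(W, F[:, j], damp=damp, atol=1e-12, btol=1e-12)[0]
    return X


if __name__ == "__main__":
    # Synthetic check: 10 splats observed by 200 pixels, 4-dim features.
    rng = np.random.default_rng(0)
    W = sp.random(200, 10, density=0.3, format="csr", random_state=0)
    X_true = rng.normal(size=(10, 4))
    F = W @ X_true  # noise-free "rendered" pixel features
    X_hat = lift_features(W, F)
    print("max abs error:", np.abs(X_hat - X_true).max())
```

The per-channel loop is kept for clarity. For CLIP-sized features with hundreds of channels, factorizing or preconditioning `W^T W` once and reusing it across channels would likely be cheaper than running LSQR per channel.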